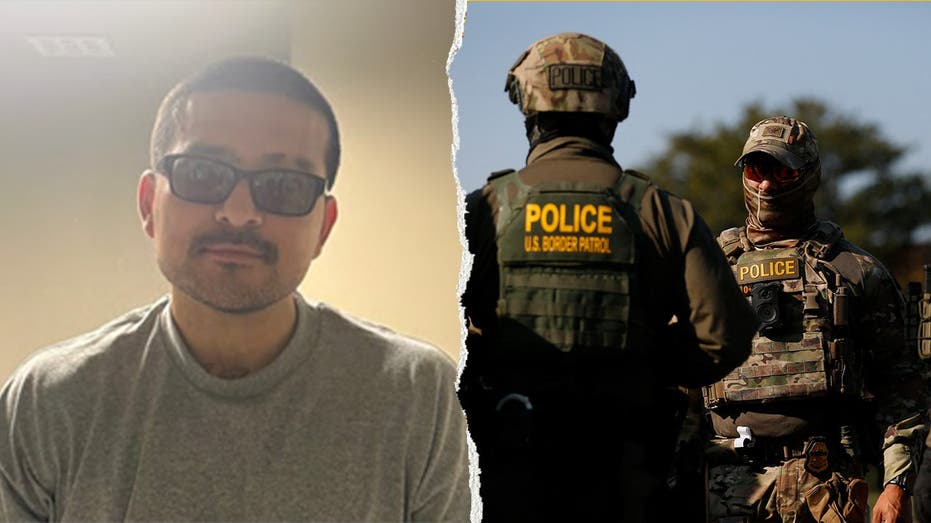

Angela Lipps, a 50-year-old grandmother of five from Tennessee, endured over five months in custody for a crime she did not commit. Her ordeal began when U.S. Marshals arrested her in July 2025, based on a facial recognition technology match that erroneously connected her to a bank fraud case in North Dakota. This alarming incident underscores the critical need for scrutiny and human oversight when deploying advanced AI tools in the justice system.

A Case of Mistaken Identity Across States

Lipps, who says she has never traveled to North Dakota, was arrested more than a thousand miles from the alleged crime scene. The bank fraud case, which originated in Fargo and West Fargo, involved a suspect who reportedly used a false military ID. Investigators used facial recognition software to compare blurry surveillance images from the bank with Lipps’ driver’s license photos and social media profiles. Her defense attorney, Jay Greenwood, argues that the resulting match was insufficient and that the case should never have escalated to her prolonged detention. She was eventually released around Christmas Eve, after bank records showed she was in Tennessee at the time of the alleged fraud.

Examining the Limitations of AI Surveillance

The incident raises significant concerns about the reliability of AI-driven surveillance tools. Fargo Police Chief Dave Zibolski previously described the facial recognition tool as “an AI function through the North Dakota State Intelligence Center.” However, as Greenwood explained on the CyberGuy Report podcast, such technology must be treated as a lead, not definitive proof. He emphasized the poor quality of the initial surveillance footage, noting “terribly placed security cameras” that yielded unclear images. Facial recognition can be a useful investigative aid, but its outputs demand rigorous human verification to prevent severe miscarriages of justice. Over-reliance on imperfect algorithms without proper human review poses a substantial threat to individual liberties.

“This was a case that never should have gone this far.”

— Attorney Jay Greenwood

Safeguarding Due Process in the Digital Age

The Angela Lipps case is a stark reminder that advanced technologies can infringe upon fundamental rights if they are not implemented with extreme caution. For a conservative society that values individual freedom and limited government, the responsible deployment of AI in law enforcement is paramount.

- Accuracy is Non-Negotiable: The precision of facial recognition technology must be consistently validated before it leads to arrests.

- Human Oversight is Essential: Automated systems should augment, not replace, human judgment and investigative rigor.

- Protecting the Innocent: Robust safeguards are necessary to prevent wrongful detentions and protect citizens from being unjustly accused by flawed algorithms.

The case shows the need for clear guidelines and accountability mechanisms so that technological advances enhance, rather than undermine, the principles of justice and due process.

The experience of Angela Lipps demands a critical re-evaluation of how law enforcement agencies integrate sophisticated AI tools. While technology offers promising avenues for crime fighting, the pursuit of efficiency must never compromise the bedrock principles of justice, individual liberty, and thorough investigation. Policymakers and law enforcement leaders must collaborate to establish frameworks that ensure such powerful tools are used responsibly, ethically, and with an unwavering commitment to protecting the rights of every citizen.