In a significant move toward responsible AI deployment, Meta has rolled out its first major safety update for the AI chatbots integrated across Facebook, Instagram, and WhatsApp. The update marks a milestone in the company's ongoing efforts to mitigate the risks of AI interactions at scale. Coming on the heels of regulatory pressure and heightened public scrutiny over misinformation and harmful content, it underscores the need for robust safety protocols in AI systems. As AI embeds itself into daily digital interactions, the balance between innovation and safety becomes a focal point for industry leaders, investors, and policymakers alike.

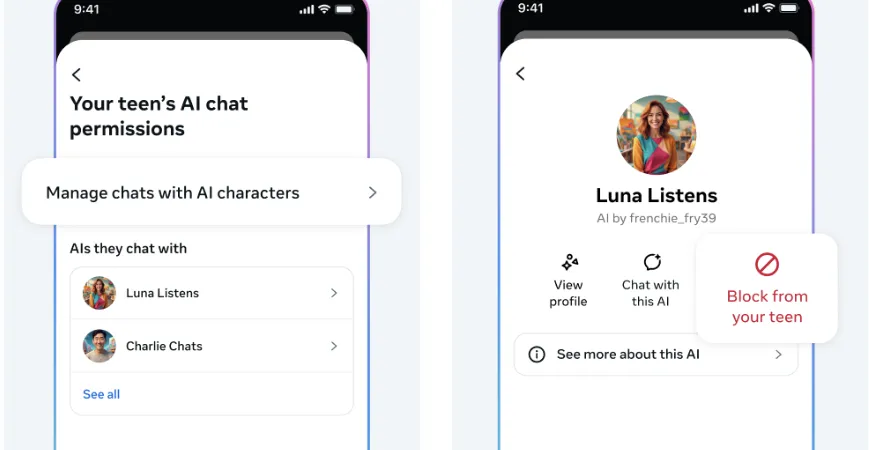

The timing of Meta's safety enhancements coincides with a broader industry emphasis on responsible AI development. Notably, the move follows recent policy shifts targeting teen safety on social platforms, including Instagram's new restrictions modeled on PG-13 standards, an effort to address mounting concerns over youth exposure to unsuitable content. Analysts from Gartner and MIT urge tech firms to prioritize transparency and accountability as AI tools become more sophisticated and pervasive. Meta's actions reflect a recognition that disruption alone no longer suffices: sustainable innovation demands built-in safeguards that do not stifle user engagement or technological advancement.

This evolution is not just about user safety. Enhanced safety protocols could redefine business models across the digital landscape. Companies that invest in AI safety capabilities position themselves as industry leaders, gaining a competitive edge through increased trust and reduced liability. Yet the path forward is fraught with challenges: balancing innovation with regulation, avoiding censorship backlash, and maintaining a seamless user experience. For companies weighing that trade-off, the main risks and opportunities are:

- Potential for increased regulatory scrutiny

- Risk of reputational damage from safety lapses

- Opportunities for monetization through safer AI products

The implications are clear: the era of unrestrained AI experimentation is giving way to a more disciplined, safety-conscious phase of development. Figures such as Elon Musk and institutions such as MIT emphasize that the future of AI hinges on embedding ethical considerations into core algorithms. For investors and entrepreneurs, this shift signals the need to treat emerging safety standards as a strategic advantage rather than an obstacle. As industry giants race to refine artificial intelligence, the pressure to deliver disruptive yet responsible solutions will intensify, pushing the frontier toward an AI-enabled future that balances progress with prudence. The question remains: how swiftly and effectively will organizations adapt to this new paradigm? The answer will likely determine their position in the next wave of digital innovation.