Social Media’s Hidden Toll: A Growing Crisis for Youth and Society

The digital age has transformed the way communities, families, and institutions interact with the youngest members of society. While social media platforms like Instagram offer unprecedented opportunities for connection, they also pose profound risks that are increasingly difficult to ignore. According to Ged Flynn, chief executive of the charity Papyrus Prevention of Young Suicide, the core issue remains unaddressed despite recent statements by Meta praising its own efforts to tackle harmful content: young people are continuously drawn into a dark and potentially destructive online environment. This concern strikes at the heart of our society and highlights the pressing need for a critical reevaluation of how digital spaces influence mental health, social cohesion, and educational development.

At the intersection of social issues and technological advancement, families and communities find themselves navigating a complex landscape. Sociologists have long debated the impact of digital culture on interpersonal relationships. Today, a growing body of evidence suggests that unregulated exposure to harmful online content can deepen feelings of isolation, depression, and anxiety among young people. This strains families by complicating their roles as moral guides and emotional anchors, especially when children encounter damaging influences beyond parental oversight. Schools and educators, meanwhile, are grappling with a new reality in which students face pressures driven by social media, ranging from cyberbullying to distorted standards of beauty and success. These pressures corrode the foundational values of self-worth and resilience.

Historians and social commentators have observed that cultural shifts, particularly the erosion of local community bonds and shared moral frameworks, have created fertile ground for the proliferation of online dangers. As social cohesion weakens, digital platforms often serve as both refuge and threat, straining the social fabric that binds generations. According to social critic Douglas Murray, the unchecked dominance of these platforms is fostering a culture of superficiality and detachment, which hampers community-building efforts and perpetuates social fragmentation. These issues extend into our institutions, where mental health services are overwhelmed and resources are stretched thin, leaving vulnerable young people without adequate support in times of crisis.

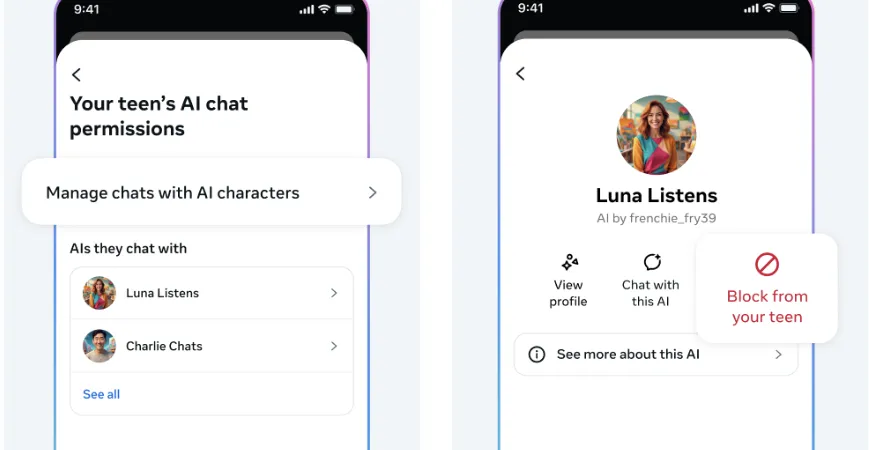

- Despite efforts by corporations to implement safety measures, children and teenagers remain exposed to harmful content that can negatively influence their development.

- The rise in youth mental health issues, including depression and suicide rates, correlates strongly with increased social media usage.

- Parents, teachers, and community leaders are calling for more stringent regulations and educational programs to counteract digital threats.

- Proposed solutions include fostering digital literacy from an early age, promoting offline community engagement, and strengthening mental health support systems.

The challenge today lies in balancing technological innovation with ethical responsibility. Social platforms undeniably have the power to build communities and spread knowledge. As Flynn indicates, however, they also neglect a deeper societal issue: their unchecked growth is contributing to a mental health crisis among our youth. Restoring stability and hope within families and communities requires a societal shift that emphasizes personal responsibility, moral education, and robust community networks. Education systems must adapt to teach young people resilience and discernment in the digital age, while families need practical support to nurture healthy online habits.