Disruptive Social Media Campaign Ushers in New Challenges for Educational Privacy and Political Discourse

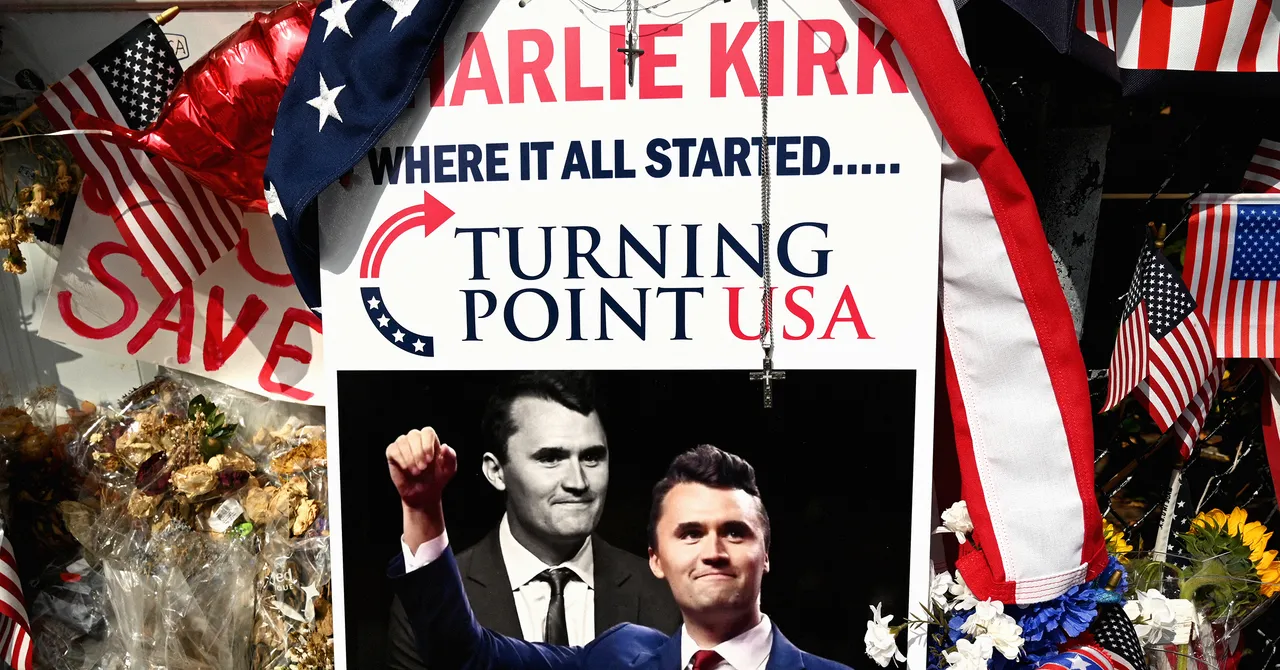

In a stark illustration of how quickly information warfare is evolving, a recent incident involving a high school in Arizona shows how social media’s power to amplify misinformation can disrupt institutions and the businesses around them. The controversy began when an innocent Halloween costume worn by teachers was falsely linked to Turning Point USA (TPUSA) founder Charlie Kirk, sparking viral outrage. The incident shows how platforms like X (formerly Twitter) have become conduits for fast-spreading misinformation that can threaten personal safety and reputation on an unprecedented scale.

The incident reveals a pivotal challenge confronting educators and businesses: malicious actors can weaponize social media for mass psychological operations that threaten privacy, safety, and trust. In this case, an image of teachers in bloodied T-shirts was misinterpreted, leading to doxxing, targeted online harassment, and even death threats. It is an unsettling reminder that the regulatory and ethical frameworks governing the digital landscape lag far behind technological capabilities. The impact extends beyond individual rights, striking at institutional stability and public confidence in community institutions such as schools.

The incident also signals a burgeoning market for advanced content verification technologies, with industry analysts such as Gartner emphasizing that the future of digital trust hinges on automated fact-checking and AI-enabled content moderation. These solutions are critical for preventing similar disruptions at scale as disinformation campaigns grow more sophisticated. For instance, AI-based image analysis and network-tracing mechanisms could be used to preempt false narratives, but such tools require significant investment and, given the privacy concerns involved, legal safeguards.

- Emerging tools are capable of identifying manipulated images and videos quickly

- Automated alerts can notify stakeholders of potential misinformation spikes

- Legal and ethical frameworks remain underdeveloped, risking misuse or overreach
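To make the idea of an automated alert concrete, here is a minimal sketch of one way a mention spike might be flagged. It is an illustration under stated assumptions, not a description of any tool named in this article: the function name `find_spikes`, the six-hour window, the threshold of three standard deviations, and the hourly counts are all hypothetical.

```python
from statistics import mean, stdev


def find_spikes(counts, window=6, threshold=3.0):
    """Return the indices whose count sits more than `threshold`
    standard deviations above the mean of the preceding `window` values.

    Hypothetical helper for illustration; the defaults are assumptions.
    """
    spikes = []
    for i in range(window, len(counts)):
        history = counts[i - window:i]
        baseline = mean(history)
        spread = stdev(history)
        # A perfectly flat baseline has zero spread; fall back to 1.0
        # so the comparison below never divides by zero.
        if spread == 0:
            spread = 1.0
        if (counts[i] - baseline) / spread > threshold:
            spikes.append(i)
    return spikes


# Hypothetical hourly counts of posts mentioning a school's name.
hourly_mentions = [12, 15, 11, 14, 13, 12, 16, 240]
print(find_spikes(hourly_mentions))
```

A check this simple has clear limits: once a spike enters the trailing window it inflates the baseline, so later hours of the same surge may go unflagged, and a jump in volume says nothing about whether the posts are true. In practice a flag like this would only prompt a human review.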

Furthermore, the incident underscores the necessity for businesses, educational institutions, and policymakers to reevaluate their engagement with social media. The disruption also presents an opportunity: those who develop and implement cutting-edge verification and safety technologies could become essential partners in safeguarding digital spaces. Pioneering entities like MIT’s Media Lab are exploring such solutions, recognizing that true innovation in this realm is crucial for maintaining integrity in digital communication. As these technologies mature, they could serve as the foundation for a new era where truth prevails over misinformation, transforming the social media landscape into a more resilient, trustworthy environment.

Looking ahead, this incident serves as a clarion call for all stakeholders to invest urgently in disruption-resistant technology and foster a culture of digital responsibility. Rapid technological advancements, ranging from blockchain-based verification systems to AI-driven content analysis, are poised to redefine how truth is maintained in an age overwhelmed by data. The coming decade is critical: failing to adapt could allow malicious actors to shape perceptions, destabilize institutions, and influence societal outcomes. As Elon Musk and Peter Thiel have often emphasized, the future belongs to those pioneering disruptive, innovative solutions that can turn the tide against digital chaos and misinformation. Strategic foresight and swift technological deployment will determine who leads this new digital frontier; those who act now will shape the foundations of a more secure, transparent digital world.