Meta Faces Landmark Legal Battles: Disruption at the Crossroads of Technology and Society

In what could be a watershed moment for the tech industry, Meta is embroiled in a series of high-profile lawsuits that threaten to reshape social media accountability. The state of New Mexico has sued the social media giant, alleging that Meta failed to protect minors from exploitation and designed platforms that fostered harmful environments. The case signals a broader shift in regulatory attitudes towards disruption, innovation, and corporate responsibility within the digital ecosystem. With Meta declining to settle, the proceedings could expose internal practices that allegedly prioritized engagement metrics over user safety, drawing public and governmental scrutiny to the societal impact of social media’s business models.

Adding to Meta’s legal challenges is a simultaneous trial in California, the nation’s first to test claims of social media addiction. This “JCCP” (Judicial Council Coordination Proceeding) brings together multiple civil suits, and it has drawn warnings from figures like Sacha Haworth of the Tech Oversight Project, who describes “an industry that has enabled predators and addictors alike.” Plaintiffs accuse Meta, along with companies such as Snap, TikTok, and Google, of designing products whose algorithms are tuned to maximize user engagement at the expense of minors’ well-being. Notably, TikTok and Snap have already settled, which makes Meta’s resistance to settlement a focal point: it could lead to unprecedented witness testimony that reveals the inner mechanics of platforms built on “attention economy” strategies. The trial underscores a pivotal industry shift, with regulators and courts actively challenging a trajectory of innovation that borders on exploitation.

From a business perspective, these legal battles lay bare a critical truth for the tech sector: the cost of doing disruptive business is rising. Meta’s alleged complicity in enabling harmful content and exploitation illustrates how a relentless pursuit of growth and user engagement can clash with regulatory and moral boundaries. As Gartner analysts observe, such lawsuits serve as a “canary in the coal mine,” signaling that **the era of unchecked platform innovation without accountability is nearing its end**. The implications are clear: big tech firms must now balance innovation with compliance, or risk legal and financial repercussions severe enough to stifle future disruption. Companies will need to build technology ecosystems that are more resilient to legal, ethical, and societal pushback, a call to arms for entrepreneurs and tech leaders eager to shape the future responsibly.

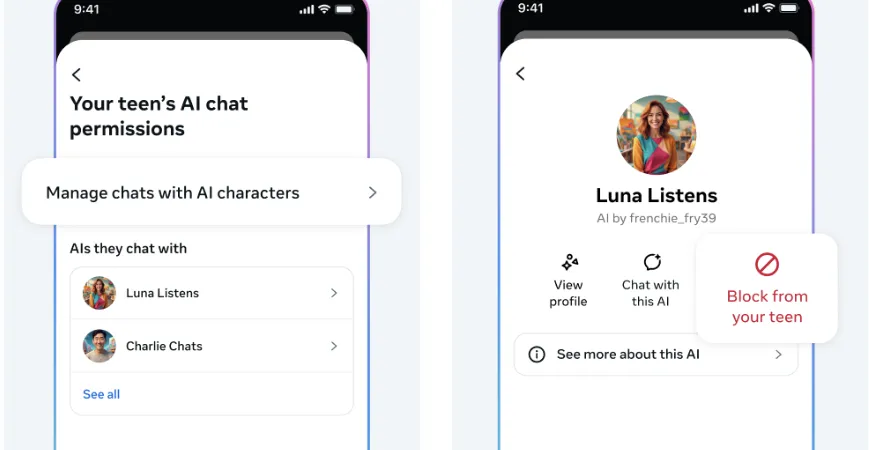

Looking ahead, the emerging legal landscape points to a fundamental reassessment of how social platforms innovate and monetize. As regulations tighten and consumer awareness grows, **the next wave of tech innovation will likely favor transparency, safety, and ethical design**. Industry titans have a limited window to pivot towards technologies such as AI-driven moderation, privacy-preserving algorithms, and robust user protections, and to build these into their core strategies to future-proof their business models. The ongoing trials mark a critical inflection point; failure to adapt could result in a “regulation tsunami” that disrupts the dominance of traditional giants. For entrepreneurs and investors targeting the next frontier of technology, the message is unmistakable: act swiftly, innovate with integrity, and prioritize societal benefit, because the future of tech is being rewritten today, and only the most visionary will thrive amid the disruption ahead.