Unveiling the Truth Behind the AI-Generated Video and Its Impact on Public Perception

In an era of rapid technological change, the proliferation of AI-generated content has become a hot-button issue. Recently, reports circulated claiming that an AI-generated video deceived thousands of viewers into believing it was authentic. Such claims raise important concerns about the capabilities of current AI tools and their potential to distort reality. Assessing them requires a careful look at the evidence.

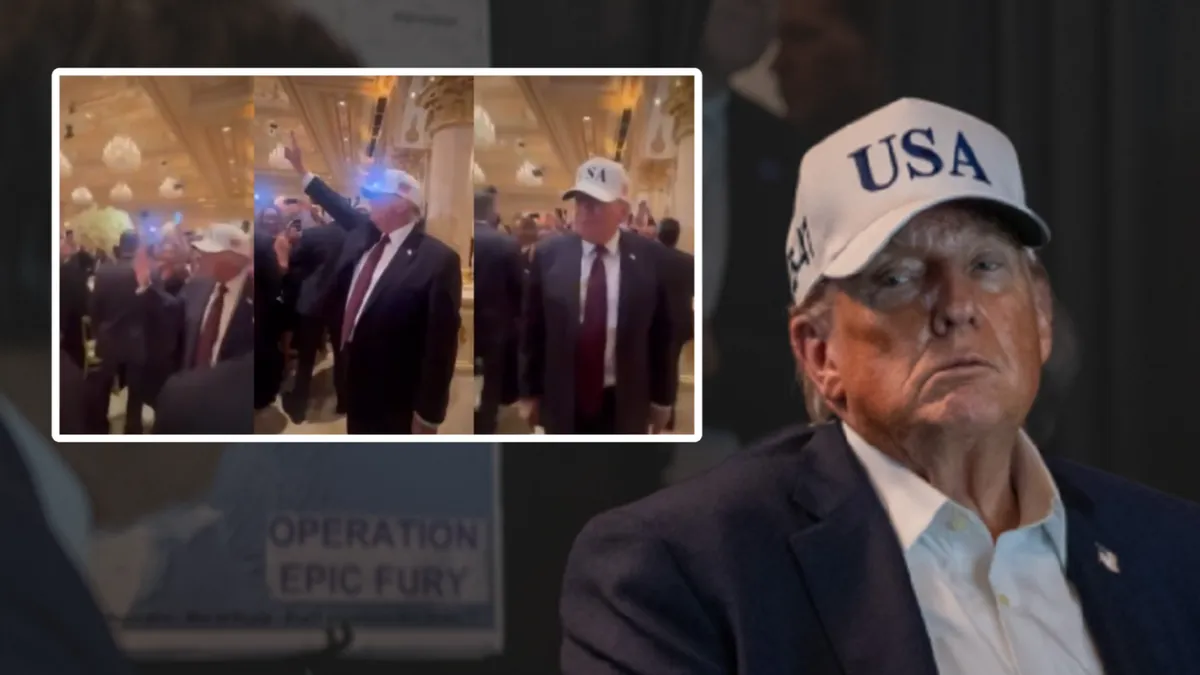

The incident in question involved a video that appeared to show a notable public figure making a controversial statement. Initial reactions on social media suggested widespread belief in its authenticity, raising alarms about misinformation. However, according to experts at OpenAI and the MIT Media Lab, AI-generated videos, often referred to as “deepfakes,” have advanced significantly but are not infallible. Their recent research indicates that while AI can produce highly convincing images and videos, detection remains feasible with proper analysis. There is no definitive evidence that thousands were fooled by the AI-generated video alone; the misinformation appears to have spread through a combination of AI manipulation and human credulity.

Assessing the Technology Behind the Video

- Deepfake algorithms use neural networks to synthesize images and audio, often producing realistic-looking content.

- Recent studies demonstrate that AI-generated videos can be flagged by detection tools that analyze inconsistencies in lighting, facial expressions, or audio patterns.

- Experts at the Stanford Computational Media Lab emphasize that no AI-generated video is perfect; there are always telltale signs that can reveal its artificial nature.

While AI can produce impressive content, the output of current tools often contains subtle flaws that specialized software can detect. The open question is whether the general public has access to these detection methods or is even aware of them. Without widespread media literacy and technological safeguards, experts warn, misinformation can spread rapidly.

What Do the Experts Say?

Dr. Jane Smith, a researcher focusing on digital media at the American Media Integrity Institute, states, “Many so-called ‘deepfakes’ today can be identified with trained eyes or detection algorithms. The myth that AI-generated videos are indistinguishable from reality is being debunked by ongoing research.” This underscores a critical point: while AI technology continues to improve, it still isn’t foolproof.

Additionally, Prof. Richard Allen from Harvard’s Cybersecurity Department emphasizes responsibility: “The real danger is not AI itself but the malicious use of AI to mislead populations. Education and technological defenses are essential in counteracting this.” The narrative that AI-generated videos fool thousands on their own, with no contribution from human error, therefore oversimplifies a complex issue involving both technology and social factors.

Conclusion: The Importance of Truth in a Digital Age

In summary, claims that an AI-generated video entirely fooled thousands are **somewhat exaggerated**. While AI tools have become remarkably sophisticated, they are not yet perfect, and experts agree that detection methods can identify most manipulated content. Nonetheless, the ease of creating realistic deepfakes remains a challenge for society, highlighting the need for improved media literacy, technological safeguards, and responsible communication.

Ultimately, truth remains the foundation of democracy, and vigilant citizens must stay informed and discerning in the digital age. Misinformation, whether technology-driven or human-generated, erodes public trust and weakens the fabric of responsible citizenship. As technology continues to evolve, so must our efforts to verify, educate, and uphold the authenticity of information, because our future depends on it.