Meta’s Latest Push into AI-Enhanced Camera Roll Features Sparks Industry-Wide Disruption

Meta is again testing the boundary between artificial intelligence and user data with its latest feature rollout, raising significant questions about the future of data-driven innovation and digital privacy. The social media giant recently announced a new camera roll feature on Facebook that uses AI to help users enhance their photos before posting. The feature sits at the intersection of personal data and AI capabilities, combining technical innovation with a strategic market advantage that could reshape social media engagement.

First tested in June, the feature selects media from a user’s camera roll and uploads it to Meta’s cloud, ostensibly to generate creative suggestions. Meta says that private photos used solely for suggestions will not be used to train AI models unless the user explicitly authorizes it, but industry experts such as Gartner analysts caution that this transparency may be more perceived than actual. “The potential for future misuse or escalation in data harvesting practices remains a key concern,” warns Dr. Anne He, a prominent researcher in AI ethics and privacy. Meta now clarifies that media uploaded for suggestions is not immediately used to improve its AI unless the user engages further, yet the implications for industry-wide data policies remain significant.

Strategic Innovation and Industry Implications

Meta’s approach reflects a push toward convenience-driven AI interfaces that blur the line between personal privacy and technological convenience. Meta already trains its models on publicly available data dating back to 2007 and could train on user uploads in the future, and industry leaders are recognizing the strategic value of this shift. The move positions Meta to lead the next wave of AI-powered content creation, in line with the broader trend of companies using user-generated data to fuel ever more sophisticated algorithms.

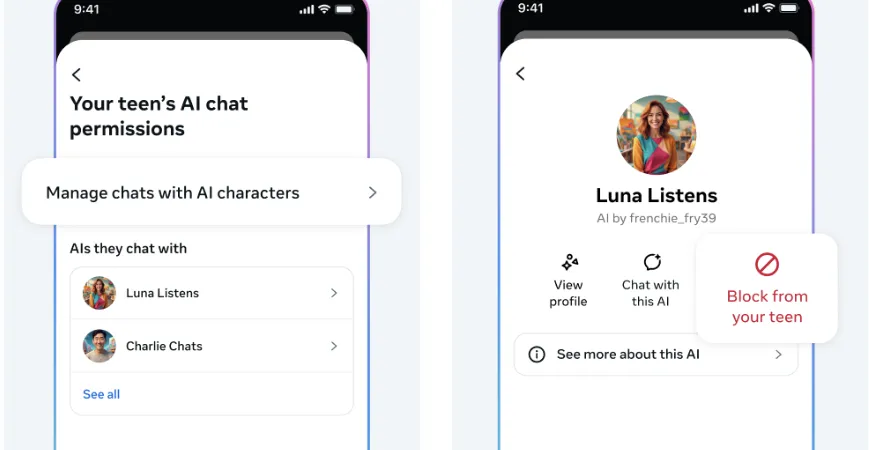

Furthermore, the company’s emphasis on not using private media for ad targeting underscores a calculated attempt to limit backlash while maximizing the data available for AI training. This stance could set a precedent for industry standards, prompting rivals such as Snapchat or Twitter to accelerate similar features. The deployment of AI-enhanced features like this one points to a future where personalized, real-time content enhancement becomes a compelling differentiator in a crowded social landscape.

Disruption, Challenges, and the Road Ahead

The move marks a pivotal moment for digital innovation, yet it comes with significant challenges. Critics argue that any collection of private media for AI training could usher in a new era of privacy erosion and undermine user trust. Industry insiders, including Elon Musk and Peter Thiel, warn that unchecked data aggregation could lead to unforeseen ethical dilemmas and regulatory crackdowns, ultimately threatening the long-term growth prospects of digital giants.

The core question remains: how will industry players balance cutting-edge innovation with user trust and regulatory compliance? As Meta advances in AI-driven content manipulation, the need for clear ethical standards becomes more urgent. With the race to dominate AI-enabled social experiences intensifying, any company that hesitates or missteps risks falling behind in a market that is rapidly evolving beyond traditional boundaries. Looking forward, the convergence of AI, privacy, and business innovation will likely define the technology landscape for the next decade, requiring companies and regulators alike to act swiftly, decisively, and with vision.