SpaceX and Cursor Collab Signals a New Era in AI Innovation and Industry Disruption

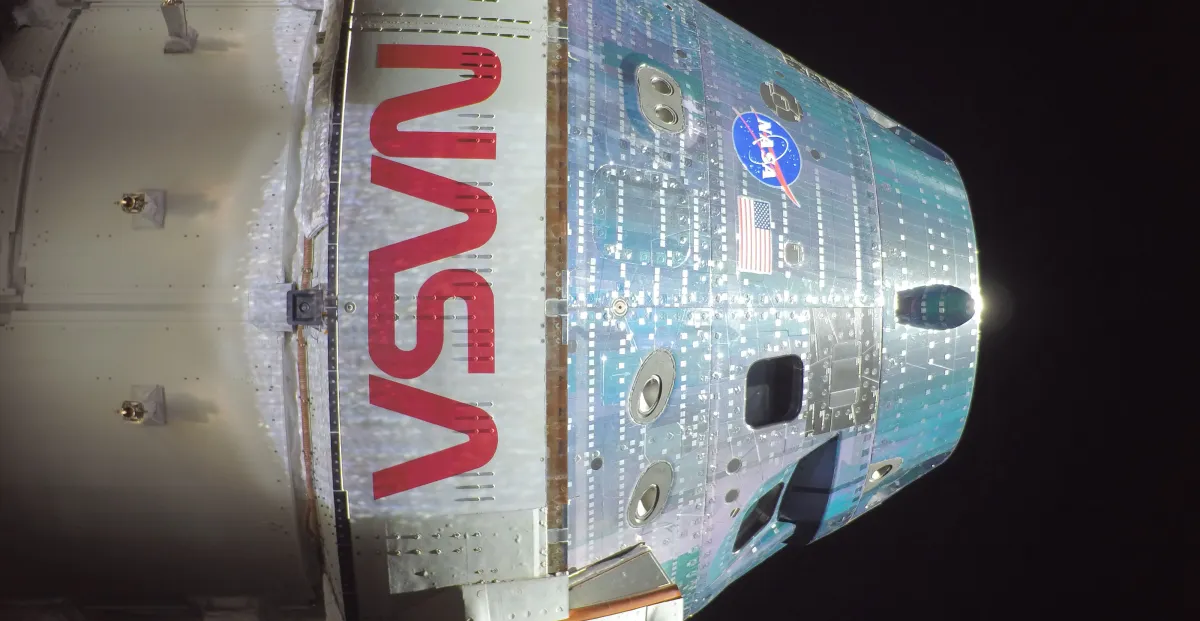

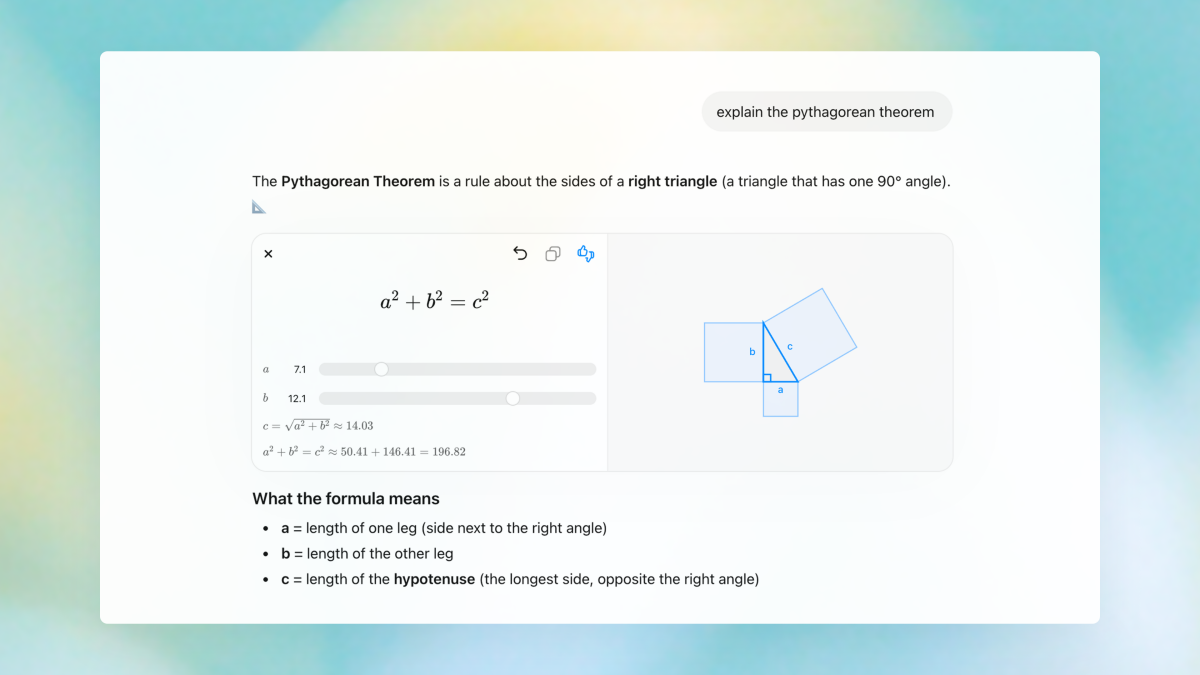

The alliance between SpaceX and Cursor marks a major shift in artificial intelligence development, with implications for both technological progress and competitive advantage. The partnership aims to combine Cursor’s knowledge-work AI, known among expert software engineers for its precision and efficiency, with SpaceX’s computational backbone, specifically its Colossus supercomputer, which is equipped with the equivalent of a million H100 GPUs. The stated goal is to build highly optimized AI models, which would put the collaboration at the forefront of the field.

According to industry experts, including analysts at Gartner and MIT technology researchers, vast computational resources, particularly H100 GPU clusters, will drastically accelerate the training of advanced AI models and push the boundaries of what is currently feasible. The partnership reflects a broader trend toward disruptive innovation: harnessing industrial-scale supercomputing to rapidly deploy AI that can take on knowledge-based tasks, from coding to problem-solving. This pairing of SpaceX’s computing resources with Cursor’s AI expertise could become a blueprint for the rest of the industry, compelling rivals to re-evaluate their own resource strategies.

Business Strategy and Industry Impact

The collaboration’s financial structure is equally noteworthy. Cursor has granted SpaceX the right to acquire the AI firm later this year for $60 billion; alternatively, SpaceX can pay $10 billion for the work the two companies develop together. This dual pathway signals strong confidence in the commercial viability of the joint effort. It is also a strategic gamble that could reshape the AI market by consolidating innovation within a single tech giant. The move is reminiscent of strategies seen in Elon Musk’s other ventures, which emphasize dominance and rapid scaling.

- Innovation: Deployment of roughly a million H100-equivalent GPUs for AI training, which could sharply reduce development timelines.

- Disruption: Challenging the traditional cloud-based approach to AI development by using SpaceX’s dedicated supercomputing infrastructure.

- Business implications: Potential market consolidation, setting new valuation benchmarks for AI startups, and redefining enterprise AI usage.

As the AI arms race intensifies, industry insiders warn that this partnership could accelerate the global shift toward autonomous systems, intelligent coding assistants, and knowledge synthesis tools, displacing many conventional approaches to software development. Given SpaceX’s track record of pushing technological frontiers (the Starship and Falcon programs, for example), its move into AI via Cursor raises the pressure on competitors to innovate or face obsolescence. The partnership shows how industry giants are committing unprecedented resources to AI, and it points to a future in which AI progress is closely tied to who controls the largest computing hardware.

Future Outlook: The Next Phase of Tech Disruption

With the collaboration underway, the industry should prepare for a period of rapid displacement and change. Gartner analysts predict that integrating supercomputing with knowledge-work AI will unlock capabilities once considered science fiction, transforming sectors such as software development, scientific research, and complex decision-making systems. The critical question for industry leaders is who will adapt quickly enough. In the race for technological supremacy, those who combine innovation with massive computational resources now are likely to determine the winners and losers.

In conclusion, the SpaceX-Cursor partnership marks a potential turning point in tech history, disrupting existing industry norms while setting a fast pace for future breakthroughs. As the alliance advances, stakeholders will need to stay vigilant, keep innovating, and find ways to benefit from this wave of disruption before it reshapes the wider technology ecosystem.