Disinformation & AI-Generated Propaganda Reshape Global Narratives Amid Innovation Surge

The recent controversy surrounding former President Donald Trump and allegations about Iranian women’s executions demonstrates an evolving battlefield where technology, misinformation, and geopolitics collide. As social media becomes the primary conduit for real-time information, the erosion of information authenticity is transforming how narratives are constructed, weaponized, and contested across the globe. Industry insiders and analyst firms such as Gartner warn that AI-driven content manipulation is at the core of these modern propaganda wars, blurring the line between fact and fiction in unprecedented ways.

At the heart of this technological upheaval lies a surge in AI-powered tools capable of generating hyper-realistic images, videos, and narratives at scale. The controversy over a collage supposedly depicting “AI-generated women” facing execution in Iran exemplifies this shift. Mahsa Alimardani of WITNESS confirms that while the images may be AI-altered, the women depicted, including Bita Hemmati, are real, and many are victims of Iran’s brutal crackdown on dissent. This incident underscores a critical business implication: technologies that enhance content realism can be exploited for political gain, and the mere suspicion of AI manipulation can be used to dismiss authentic evidence.

Innovation in Content Manipulation Fuels Geopolitical Disinformation

Industry leaders like Elon Musk and Peter Thiel have expressed concern about disruptive AI innovations that could overwhelm information ecosystems. Accounts that spread misinformation, such as the Iranian embassy’s social accounts, now leverage AI to craft content that is virtually indistinguishable from reality. Such tools enable actors to run disinformation campaigns with greater sophistication and scale, creating a dangerous landscape where fact-checking alone is insufficient.

More troubling is the proliferation of misleading political narratives. For instance, a misquoted video of a South Korean president, falsely attributed to a deceptive account, demonstrates how misinformation can escalate international tensions. This underscores a pressing need for robust verification mechanisms, an area where industry standards, like those promoted by MIT and other tech research institutions, are desperately needed but often lag behind rapidly evolving AI capabilities. The consequences are clear: if unchecked, disruptive AI content could undermine democratic institutions, intensify conflicts, and destabilize global peace.

The Business Implications & The Urgent Need for Strategic Response

From a business perspective, the rise of disruptive AI tools is both a challenge and an opportunity. Companies invested in blockchain, biometric verification, and AI content authentication are racing to develop solutions that can detect and counteract AI-mediated misinformation. According to Gartner, next-generation verification platforms will become essential infrastructure for social media platforms, governments, and corporations to safeguard trust in digital content. Failure to innovate at scale could result in lost consumer confidence and regulatory crackdowns, underscoring the importance of strategic foresight in a landscape fraught with emerging threats and market shifts.

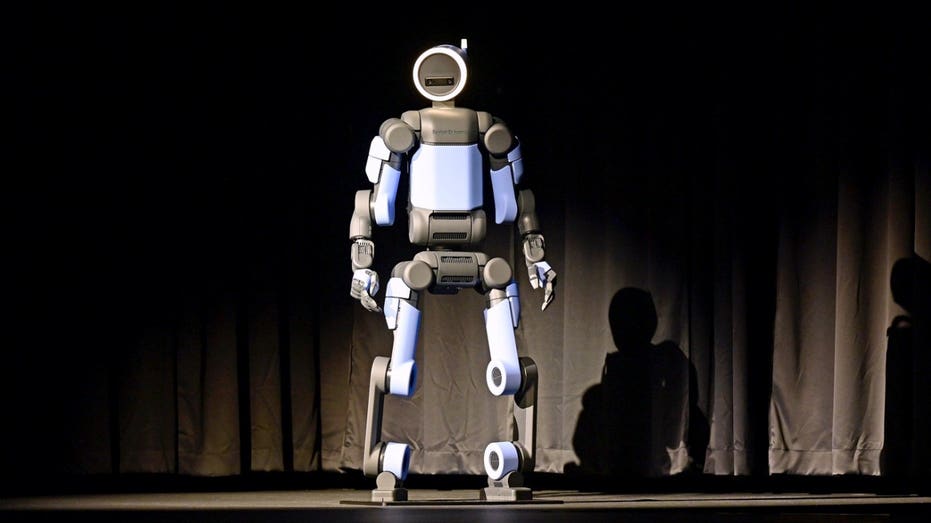

Furthermore, industry analysts warn that the pace of AI innovation necessitates bold leadership and proactive regulation. Like the groundbreaking developments in autonomous systems and neural interfaces, AI content creation is poised to redefine the information economy. Yet, as industry experts note, without robust guardrails—founded on transparency, accountability, and technological innovation—these systems risk unleashing chaos rather than progress. Fast-moving startups and global tech giants must collaborate to develop standards that ensure fact-based content remains dominant and trusted in the digital age.

Looking Forward: The Urgency of Strategic Innovation

The unfolding landscape of AI-driven disinformation presents a make-or-break moment for industry and policymakers alike. The stakes are high: failure to keep pace with disruptive technologies may lead to irreparable damage to the fabric of truth and societal stability. Whether through advanced verification systems, AI content filters, or international cooperation, the imperative remains clear: innovation must be matched with strategic foresight and unwavering commitment to integrity. As tomorrow’s technological landscape continues to evolve rapidly, those who act decisively today will determine the future of truth in the digital age—and the future of free discourse itself.